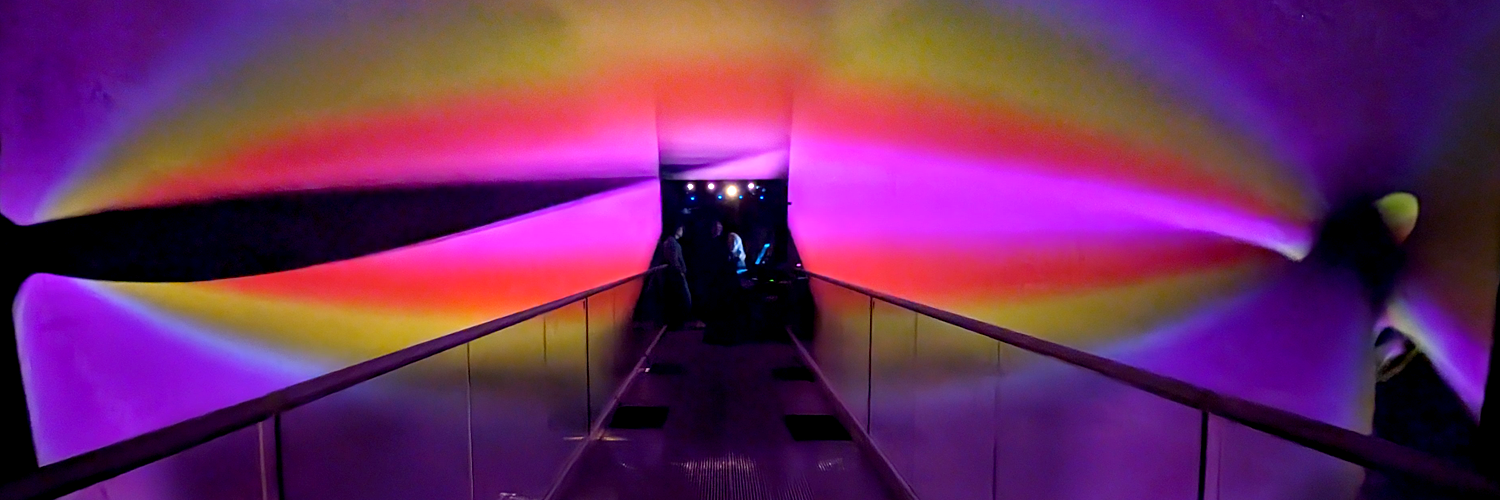

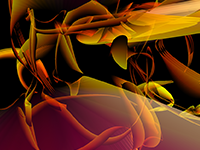

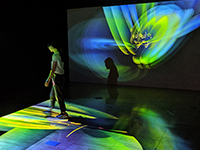

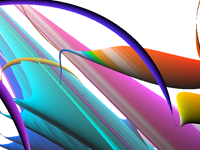

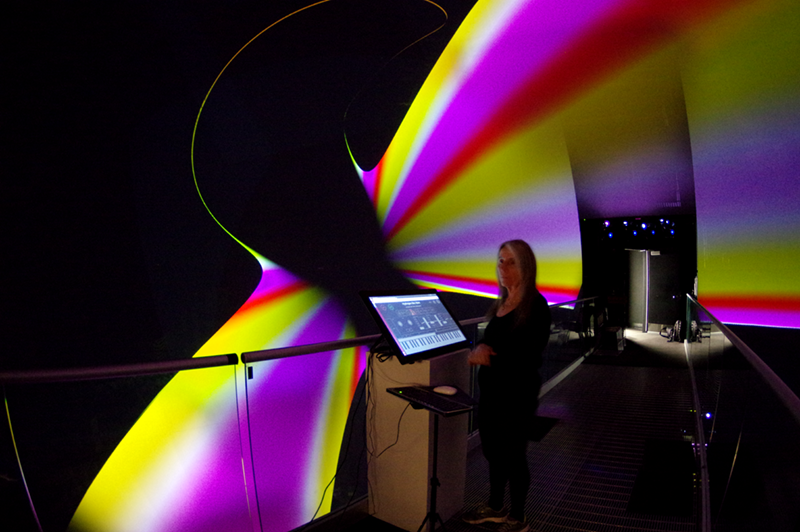

Atom inside the AlloSphere

Dr. Kuchera-Morin is Director and Chief Scientist of the AlloSphere Research Facility (http://www.allosphere.ucsb.edu/) and Professor of Media Arts and Technology and Music, in the California NanoSystems Institute at the University of California, Santa Barbara (UCSB). Her research focuses on creative computational systems, content, and facilities design.

Her 40 years of experience in digital media research led to the creation of a multi-million dollar sponsored research program for the University of California, the Digital Media Innovation Program. She was Chief Scientist of the Program from 1998 to 2003. The culmination of her creativity and research is the AlloSphere, a 30-foot-diameter, 3-story-high metal sphere inside an echo-free cube, designed for immersive, interactive scientific and artistic investigation of multi-dimensional data sets.
