Message from the Director

Dr. JoAnn Kuchera-Morin, chief designer of the facility, composer, and media artist with over thirty-five years of experience in media systems engineering, outlines the vision for our research.

MYRIOI

Welcome, SIGGRAPH 2020 community. All packages and media are available.

About

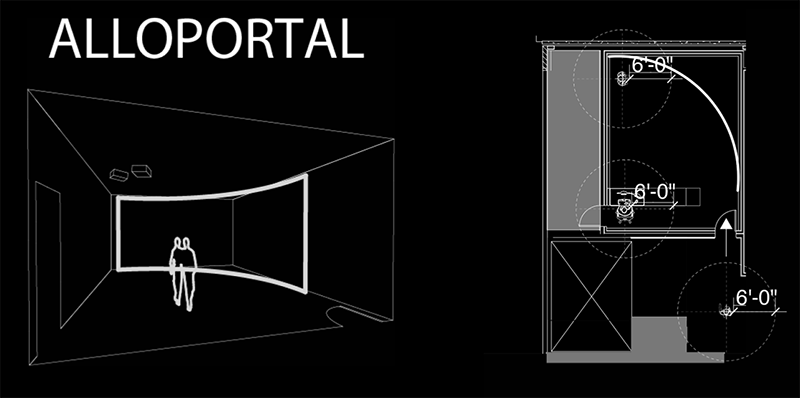

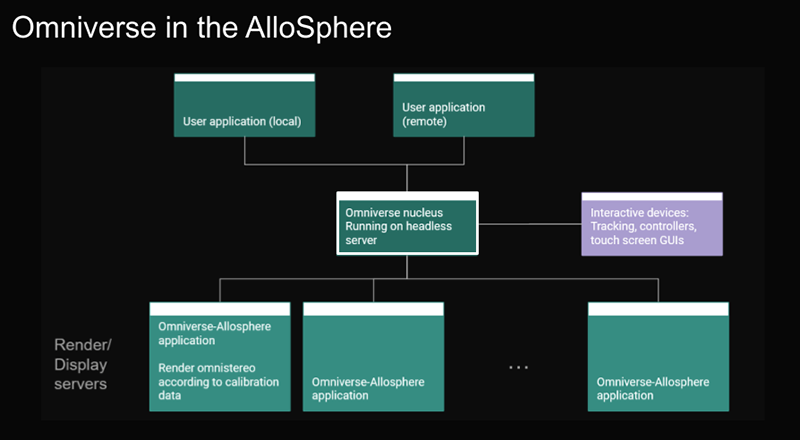

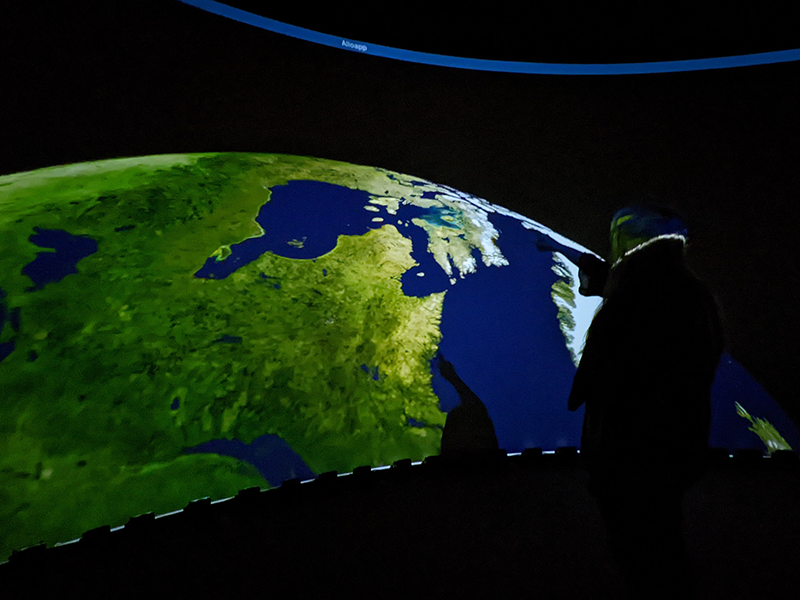

The AlloSphere Research Facility is located in the California NanoSystems Institute. Please contact Dr. JoAnn Kuchera-Morin for more information.

News

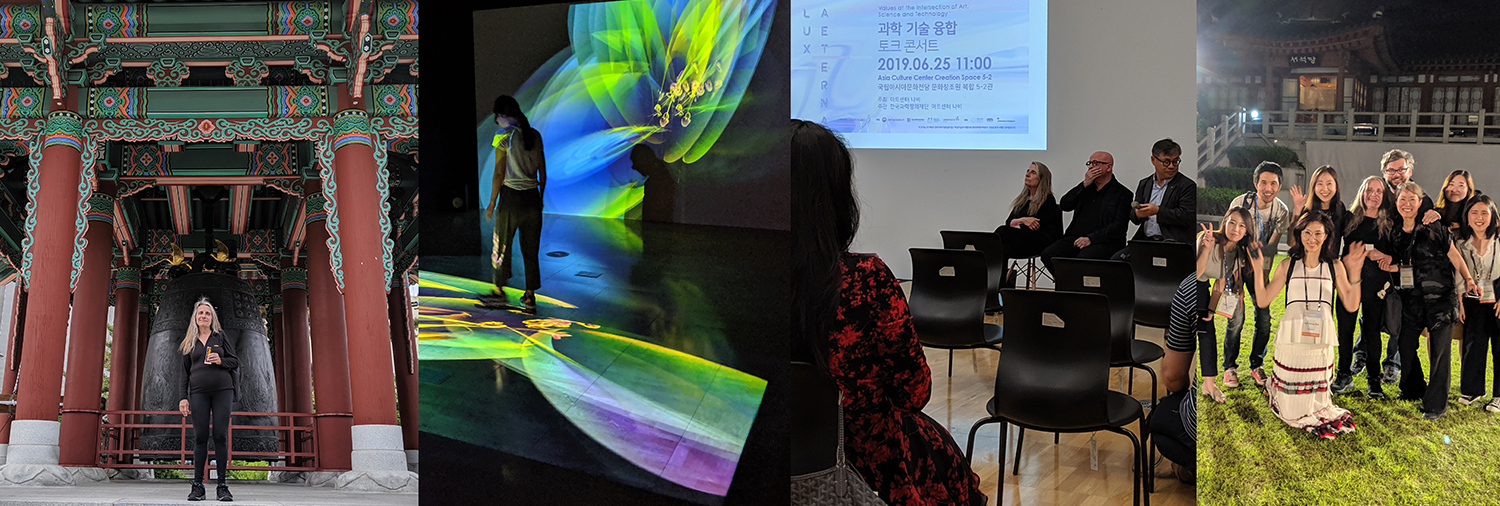

The latest news on the AlloSphere Team & Dr. JoAnn Kuchera-Morin's Exhibitions, Lectures, & Research.

Research Projects

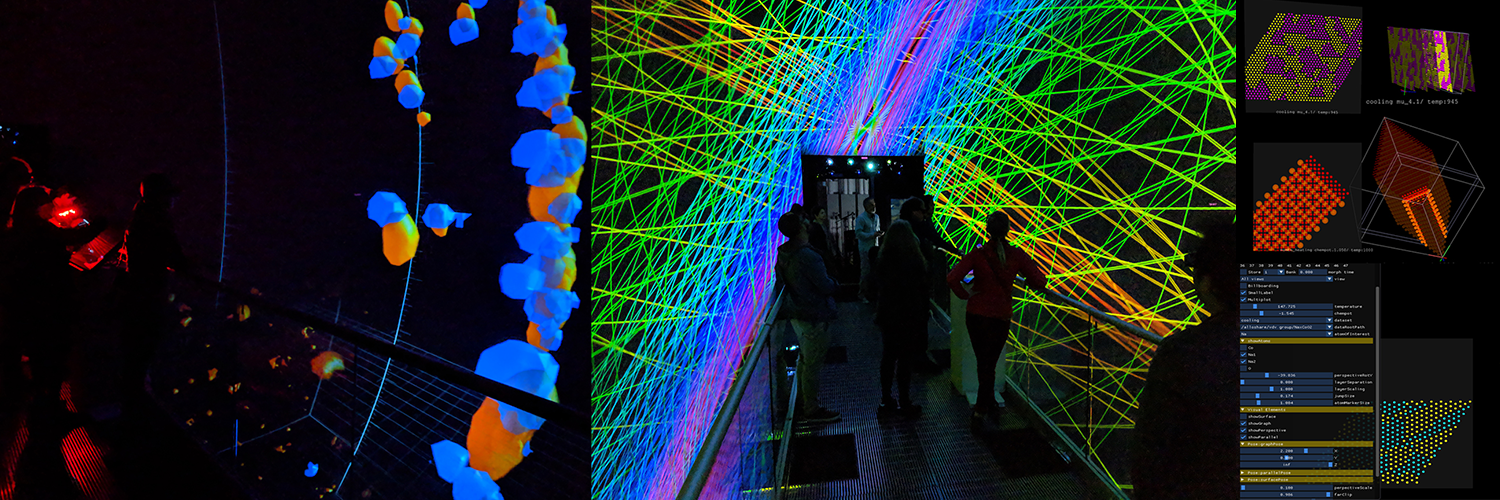

Etherial: A Quantum Composition & Installation, featured at ISEA in Gwangju, Republic of Korea. It can also be viewed in the California NanoSystems Institute's AlloPortal and AlloSphere.

Publications

A repository of publications in the Media Arts & Sciences. Please contact Dr. Kuchera-Morin for more information.

Software

Researcher Dr. Basak Alper demonstrates new ways of interacting with graph visualizations in the AlloSphere.

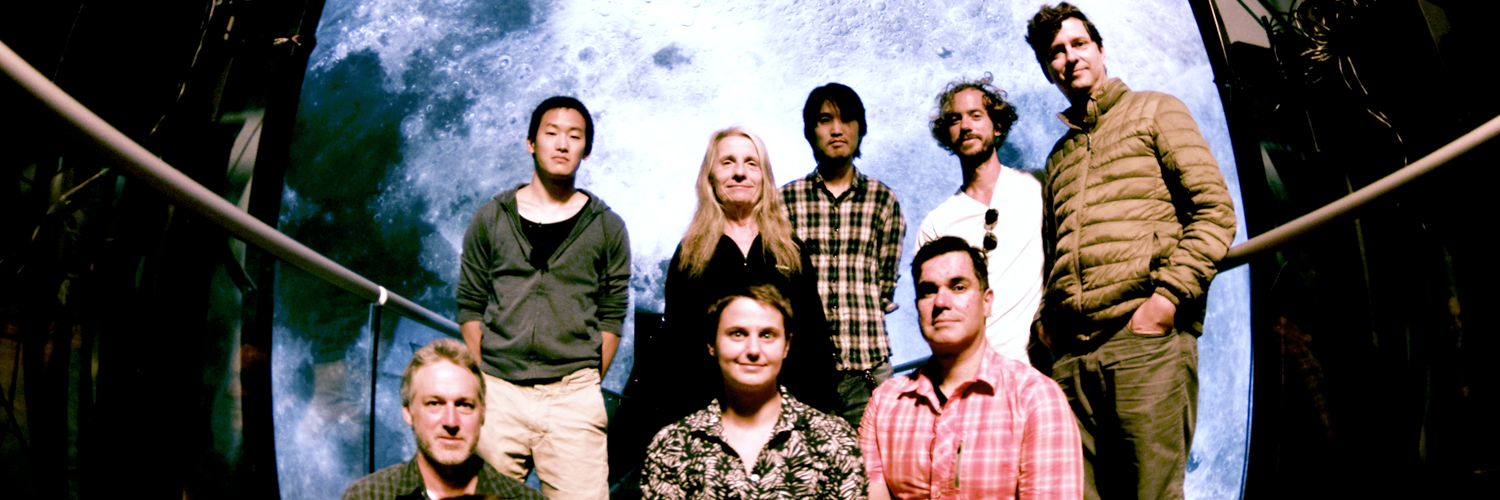

The AlloSphere Research Group: Our Team

Our team is led by Dr. Kuchera-Morin (Director, Inventor, & Lead Researcher) of the AlloSphere Research Group, with Dr. Andres Cabrera as Media Systems Engineer and Dennis Adderton as Technical Director, together with the rest of the AlloSphere Team.

Collaborate with us

The AlloSphere Research Group is open to collaboration and tours.

Contact Dr. Kuchera-Morin

Dr. JoAnn Kuchera-Morin's group is available to discuss future collaborations.